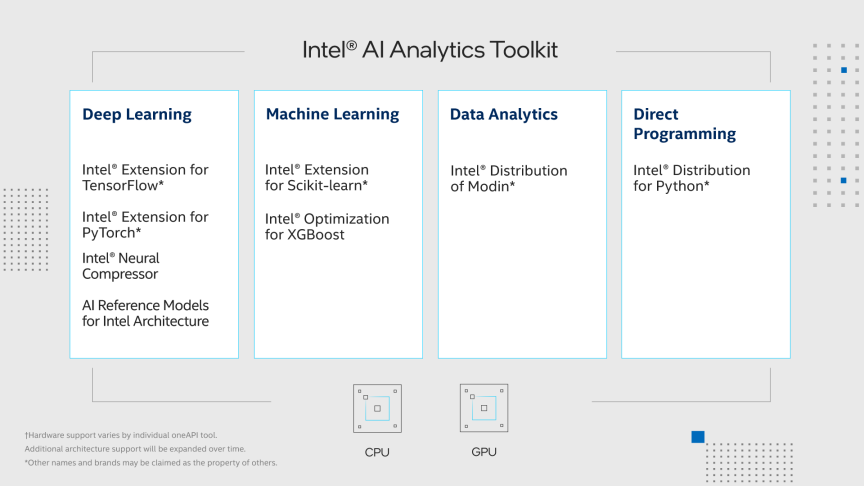

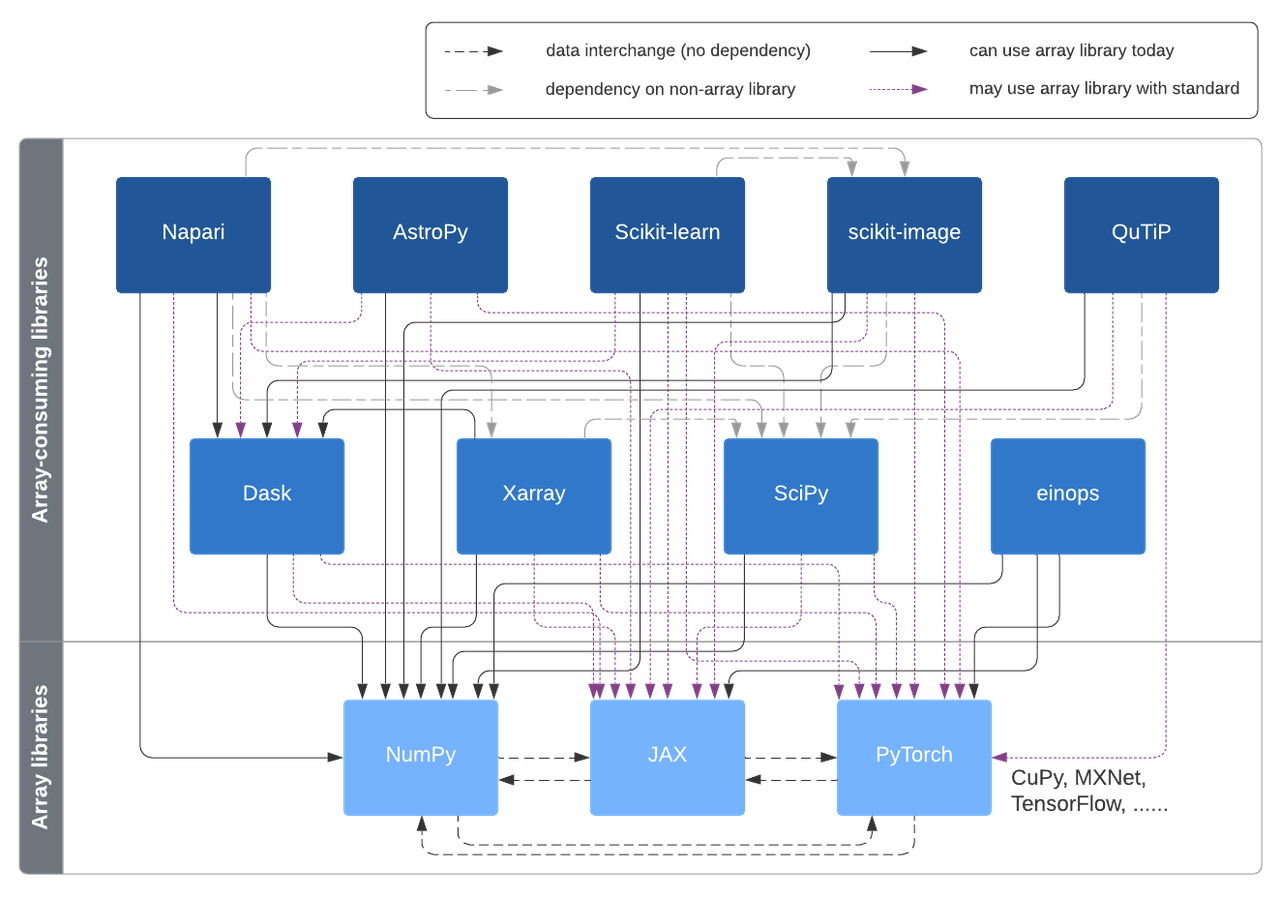

A vision for extensibility to GPU & distributed support for SciPy, scikit-learn, scikit-image and beyond | Quansight Labs

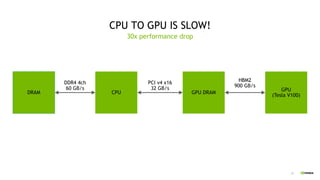

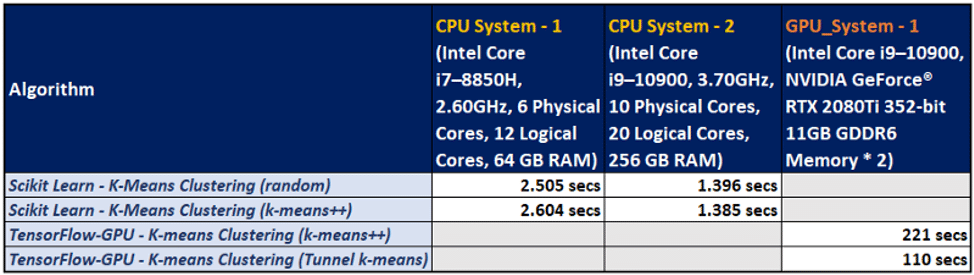

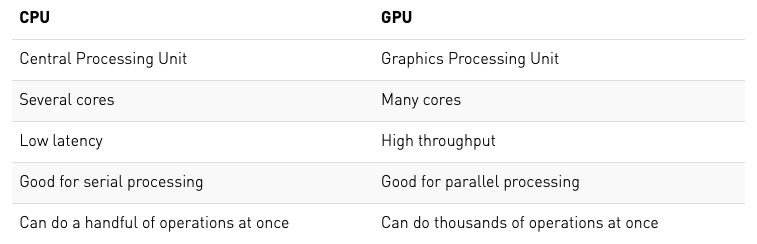

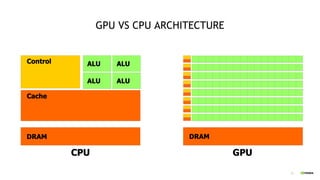

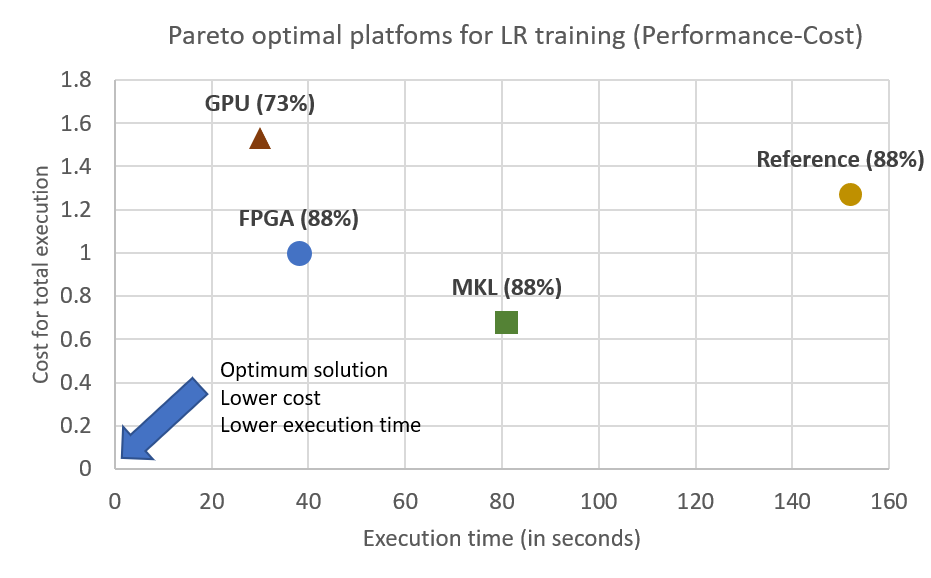

CPU, GPU or FPGA: Performance evaluation of cloud computing platforms for Machine Learning training – InAccel

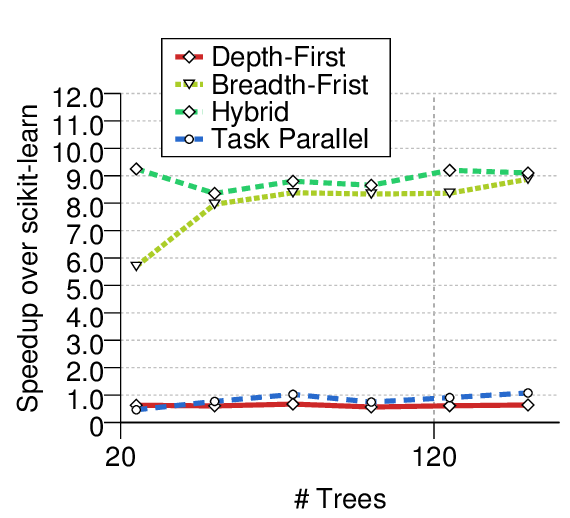

Speed up your scikit-learn modeling by 10–100X with just one line of code | by Buffy Hridoy | Bootcamp

Scoring latency for models with different tree counts and tree levels... | Download Scientific Diagram

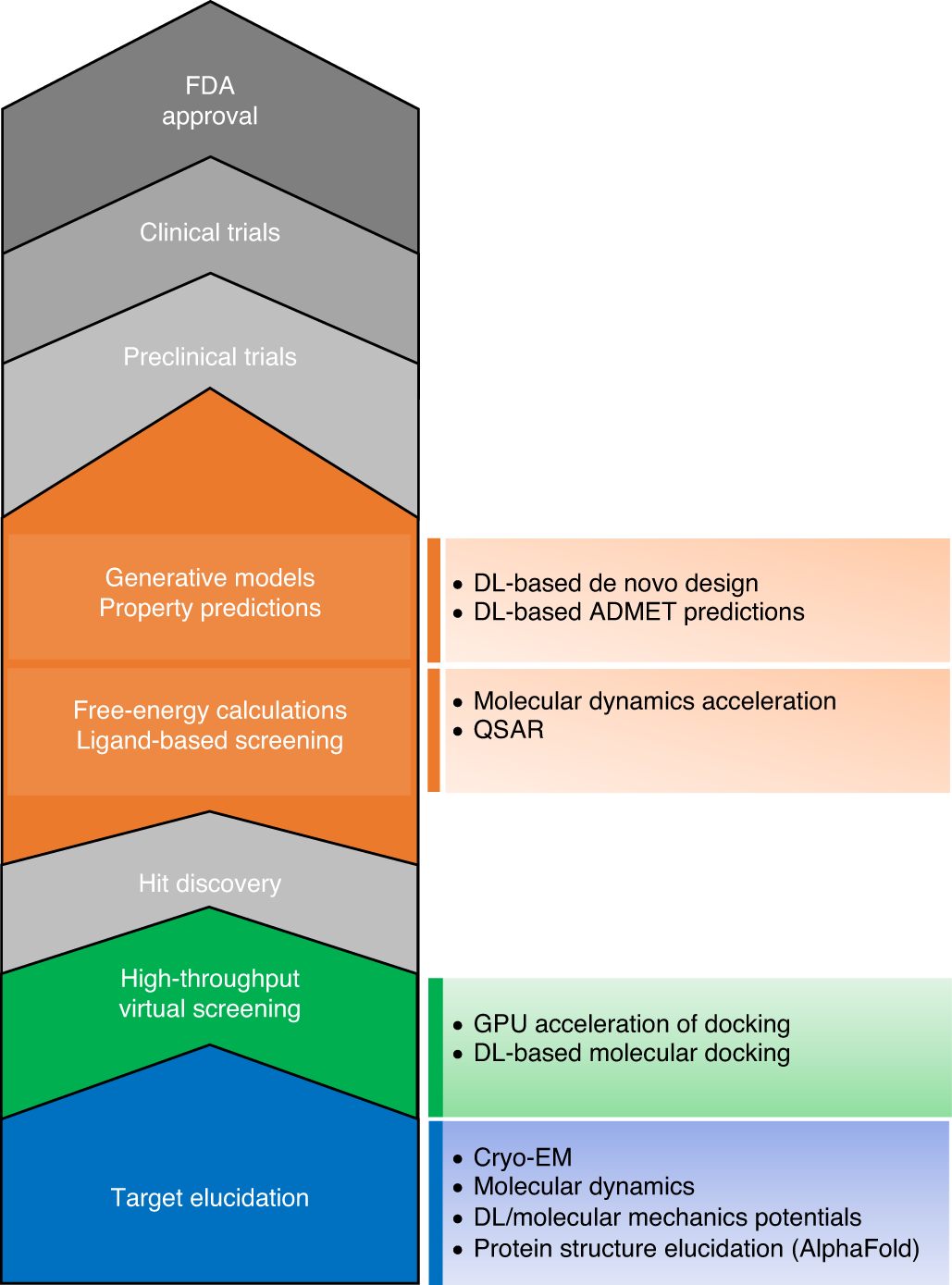

Machine Learning in Python: Main developments and technology trends in data science, machine learning, and artificial intelligence – arXiv Vanity

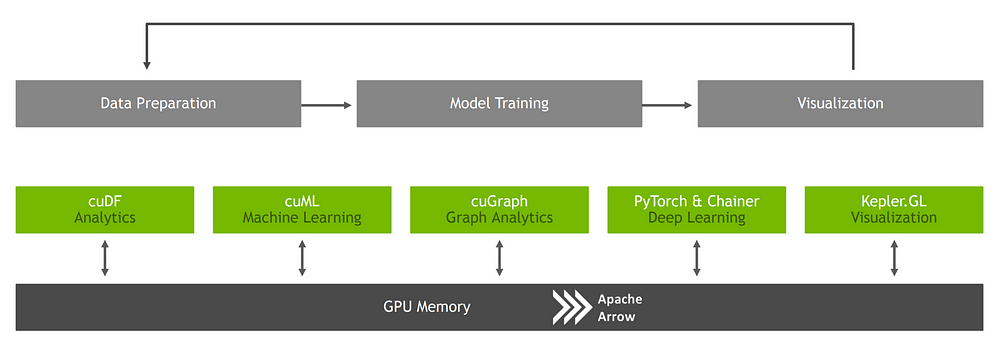

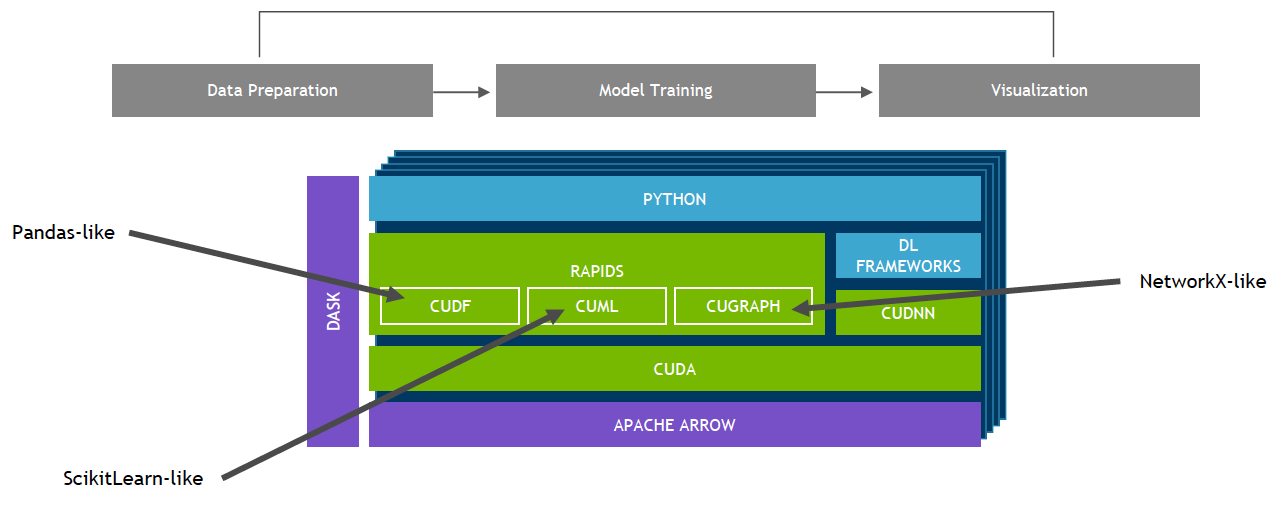

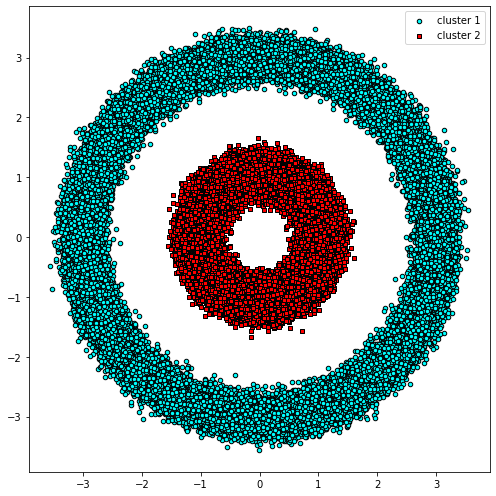

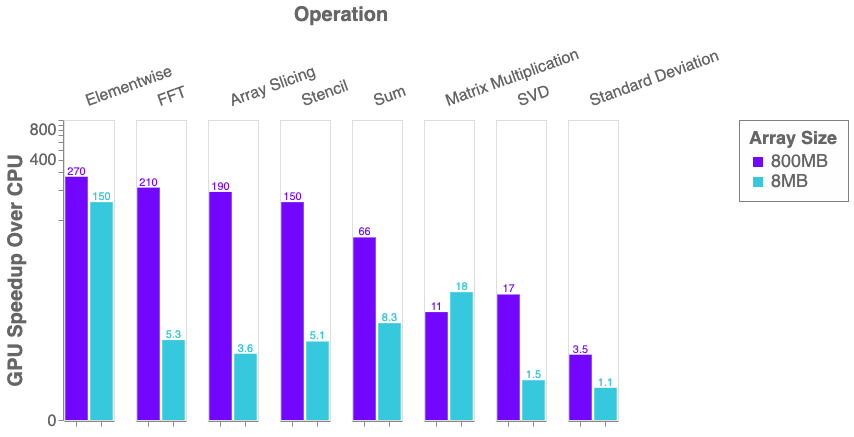

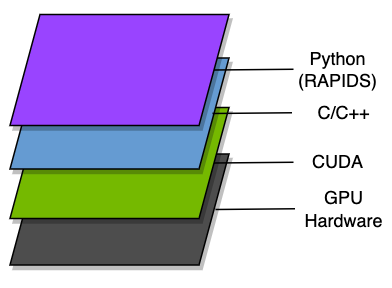

Python, Performance, and GPUs. A status update for using GPU… | by Matthew Rocklin | Towards Data Science

Speedup relative to scikit-learn over varying numbers of trees when... | Download Scientific Diagram

Boosting Machine Learning Workflows with GPU-Accelerated Libraries | by João Felipe Guedes | Towards Data Science

H2O.ai Releases H2O4GPU, the Fastest Collection of GPU Algorithms on the Market, to Expedite Machine Learning in Python | H2O.ai