SOLVED: 1. Consider the Markov chain with three states, $S = \{1, 2, 3\}$, that has the following transition matrix: $P = \begin{pmatrix} 0.6 & 0.3 & 0.1 \\ 0.5 & 0.0 & 0.5 \\ 0.2 & 0.4 & 0.4 \end{pmatrix}$ with initial distribution $\pi$ (0.7; 0.2;

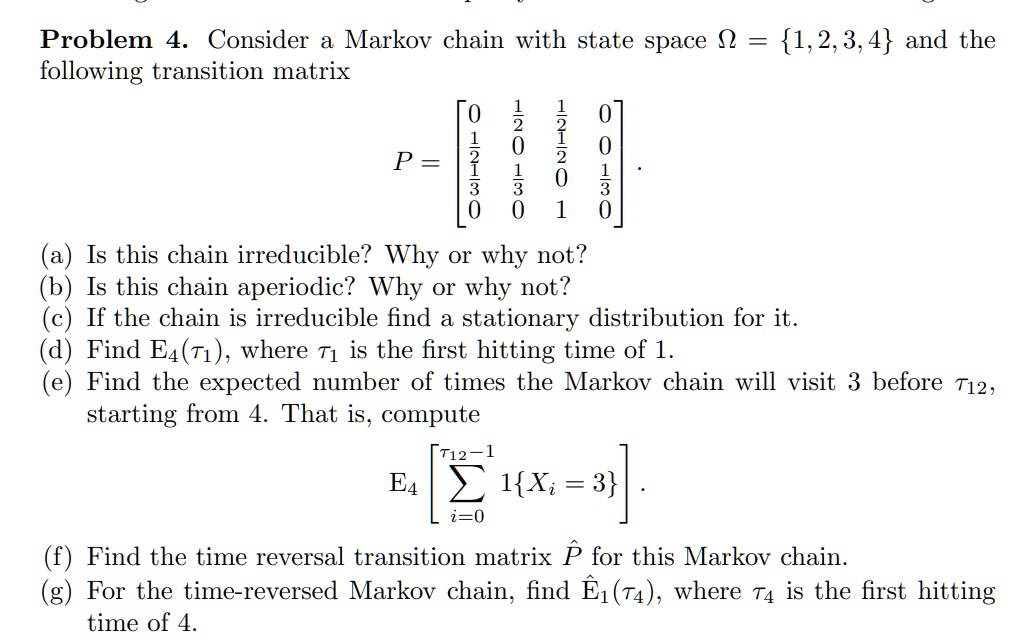

SOLVED: Problem 4. Consider Markov chain with state space n = 1,2,3,4 and the following transition matrix 0 0 6 J P 0 J 8 8 Is this chain irreducible? Why O

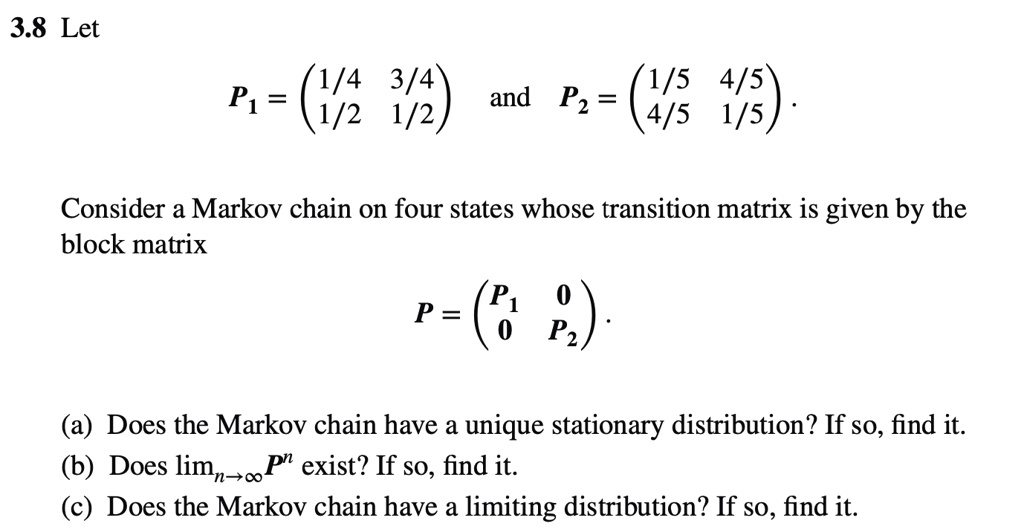

SOLVED: 3.8 Let $P_1 = \begin{pmatrix} 1/4 & 3/4 \\ 1/2 & 1/2 \end{pmatrix}$ and $P_2 = \begin{pmatrix} 1/5 & 4/5 \\ 4/5 & 1/5 \end{pmatrix}$. Consider a Markov chain on four states whose transition matrix is given by the block matrix

![SOLVED: Example 6: Find the stationary distribution of Markov chain in Example 4.](https://cdn.numerade.com/ask_images/6ec5b37fecc54d94bfa846d2628c51dd.jpg)

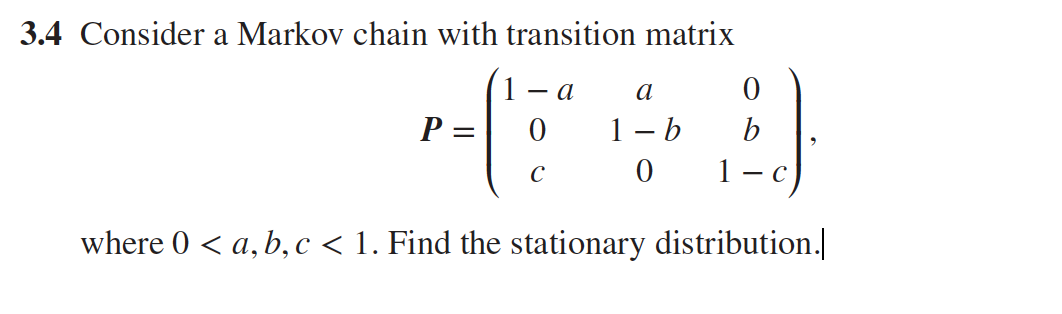

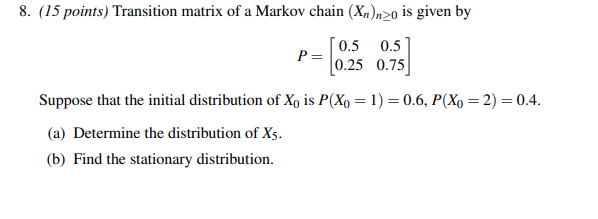

SOLVED: Example 6: Find the stationary distribution of the Markov chain in Example 4. 0.6 0. 0.5 03 0.2 0.4 0.4 0.2 Solution: Let the stationary distribution be V = [V1 V2 V3] Fo.6 033
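
The matrix of Example 4 is not legible in this listing, so the following is only a minimal sketch of one way to find a stationary distribution numerically. It assumes, purely as a stand-in, the three-state matrix from problem 1 above, and it uses power iteration (repeatedly applying v ← vP) rather than the algebraic solution the example starts to set up. The function name `stationary_by_power_iteration` and its parameters are hypothetical.

```python
def stationary_by_power_iteration(P, tol=1e-12, max_iter=10_000):
    """Approximate a row vector v with v = vP by iterating v <- vP.

    Convergence is only expected for an irreducible, aperiodic chain.
    """
    n = len(P)
    v = [1.0 / n] * n  # start from the uniform distribution
    for _ in range(max_iter):
        # Row vector times matrix: (vP)_j = sum_i v_i * P[i][j]
        nxt = [sum(v[i] * P[i][j] for i in range(n)) for j in range(n)]
        if max(abs(a - b) for a, b in zip(nxt, v)) < tol:
            return nxt
        v = nxt
    raise RuntimeError("power iteration did not converge")


# Assumption: stand-in matrix taken from problem 1, not from Example 4.
P = [
    [0.6, 0.3, 0.1],
    [0.5, 0.0, 0.5],
    [0.2, 0.4, 0.4],
]

pi = stationary_by_power_iteration(P)
print(pi)
```

For this stand-in matrix, solving vP = v together with v1 + v2 + v3 = 1 by hand gives v = (40/87, 22/87, 25/87), which the iteration should approach.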

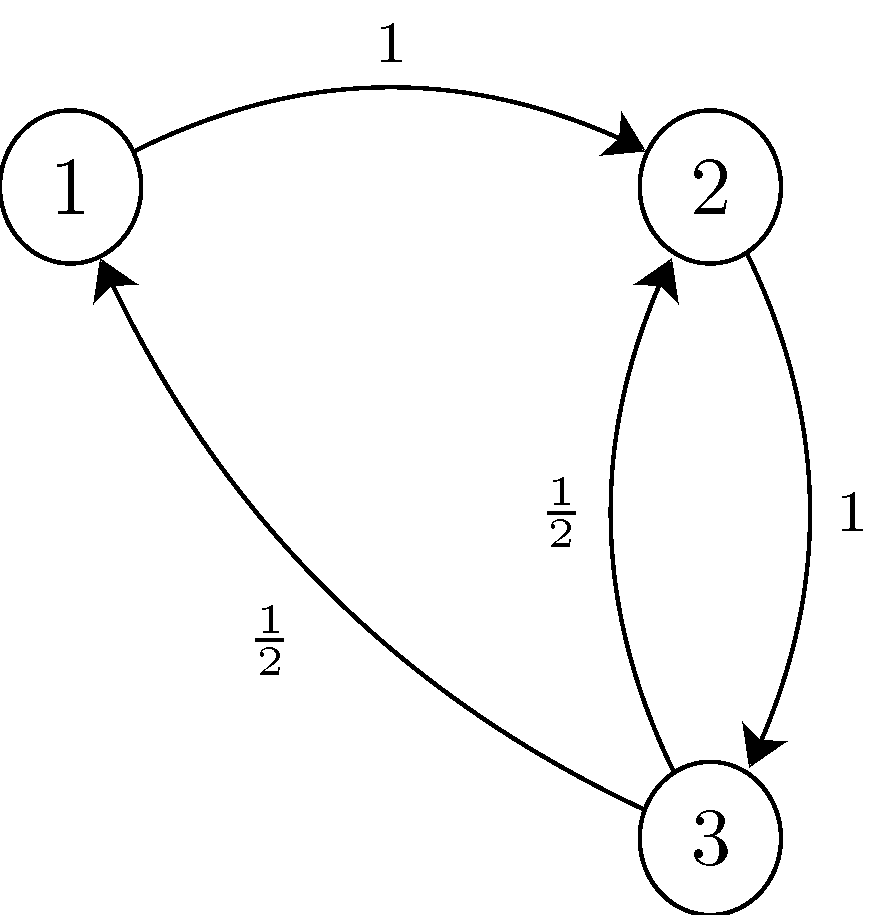

stochastic processes - Show that this Markov chain has infinitely many stationary distributions and give an example of one of them. - Mathematics Stack Exchange
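
The chain from that question is not reproduced in this listing. As a hedged illustration of how a chain can have infinitely many stationary distributions, the sketch below assumes a hypothetical four-state chain whose transition matrix is block-diagonal, built from the two 2×2 matrices of problem 3.8 above. The block-diagonal arrangement is an assumption, since that problem statement is cut off. Each block has its own stationary distribution, and every convex combination of the two is stationary for the whole chain.

```python
from fractions import Fraction as F


def step(v, P):
    """One step of the chain on a row vector: returns vP."""
    n = len(P)
    return [sum(v[i] * P[i][j] for i in range(n)) for j in range(n)]


# Assumption: block-diagonal matrix with two closed classes {1,2} and {3,4}.
P = [
    [F(1, 4), F(3, 4), 0, 0],
    [F(1, 2), F(1, 2), 0, 0],
    [0, 0, F(1, 5), F(4, 5)],
    [0, 0, F(4, 5), F(1, 5)],
]

# Stationary distributions of the individual blocks, padded with zeros.
pi_a = [F(2, 5), F(3, 5), 0, 0]
pi_b = [0, 0, F(1, 2), F(1, 2)]


def mix(a):
    """Convex combination a * pi_a + (1 - a) * pi_b for 0 <= a <= 1."""
    return [a * x + (1 - a) * y for x, y in zip(pi_a, pi_b)]


# Every mixing weight gives another stationary distribution (exact arithmetic).
for a in (F(0), F(1, 3), F(1, 2), F(1)):
    v = mix(a)
    assert step(v, P) == v
    print(a, v)
```

Because the weight `a` can be any number in [0, 1], this construction yields infinitely many stationary distributions whenever a chain has two or more closed classes.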

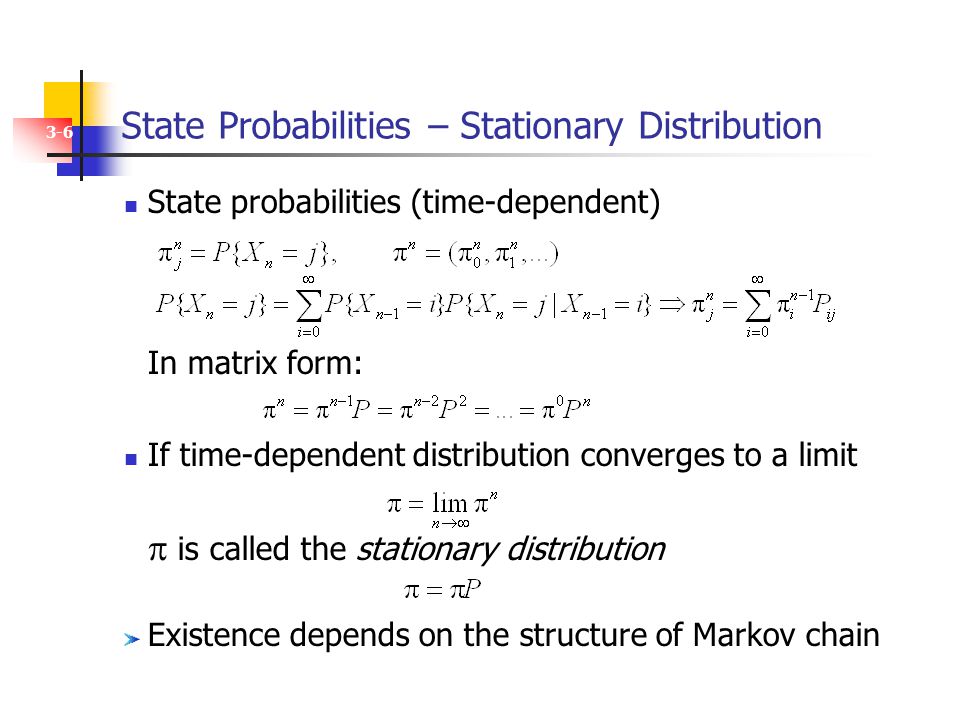

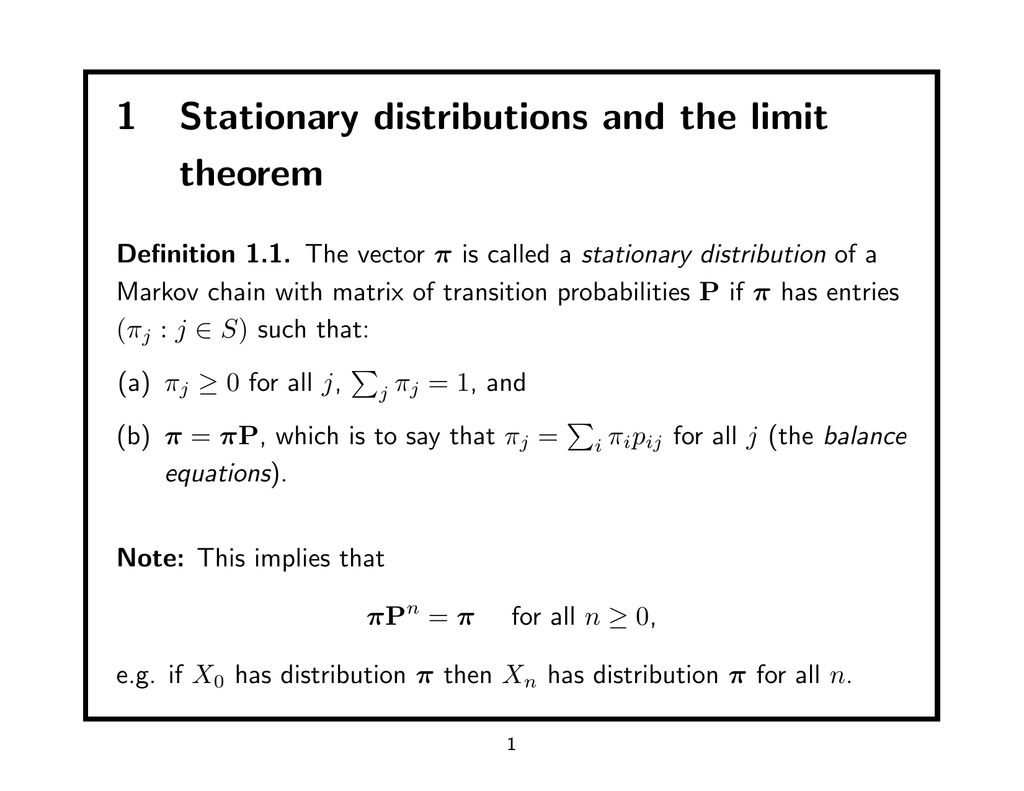

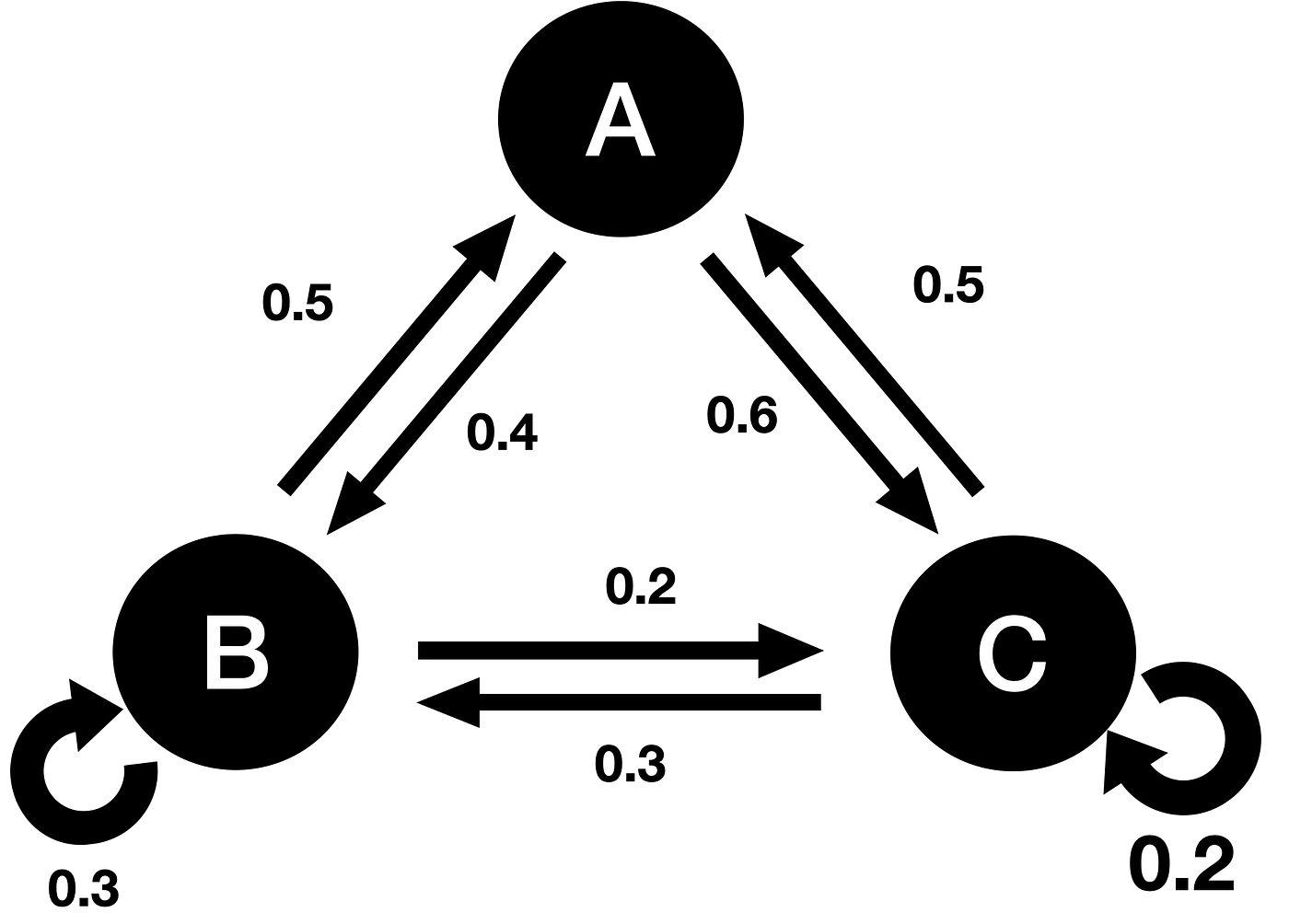

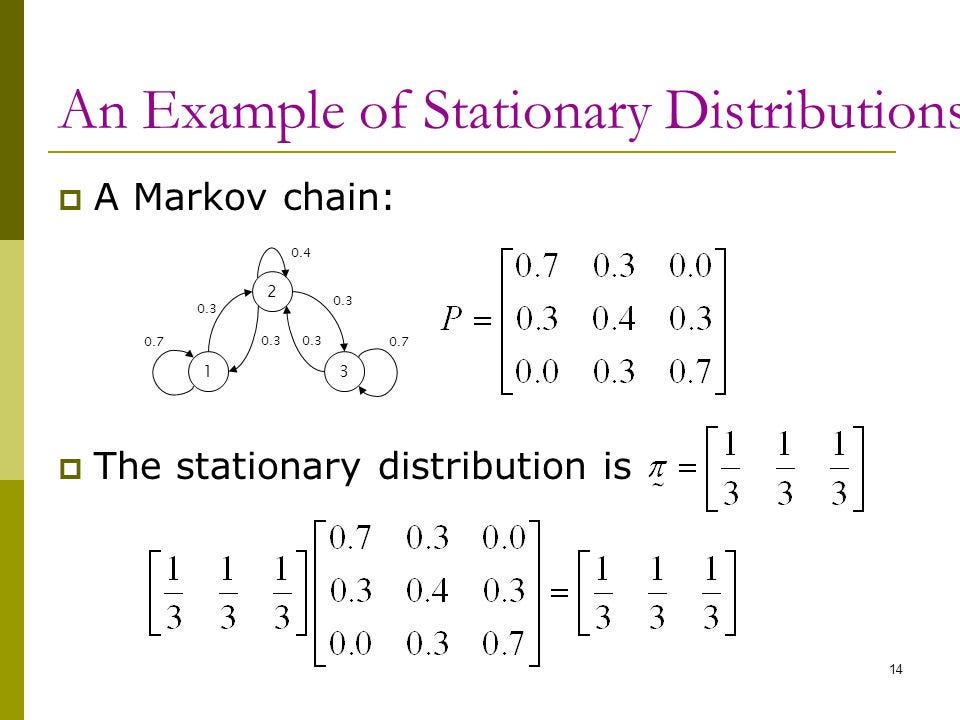

Please can someone help me to understand stationary distributions of Markov Chains? - Mathematics Stack Exchange

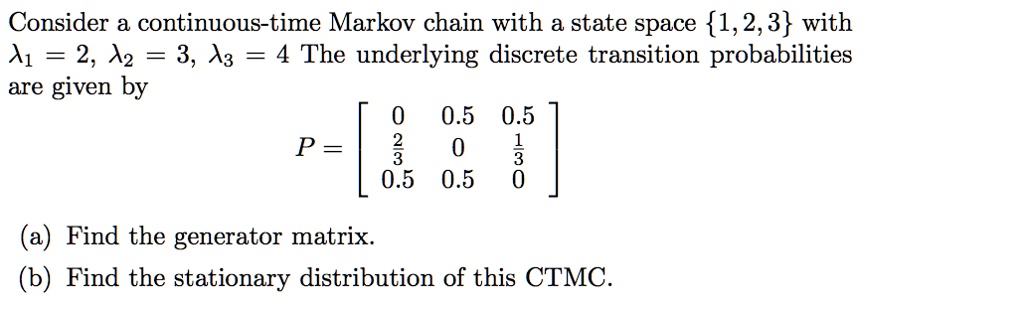

SOLVED: Consider a continuous-time Markov chain with state space {1, 2, 3} with λ1 = 2, λ2 = 3, λ3 = 4. The underlying discrete transition probabilities are given by 0 0.5 0.5 P =

![[CS 70] Markov Chains – Finding Stationary Distributions - YouTube](https://i.ytimg.com/vi/YIHSJR2iJrw/maxresdefault.jpg)